Lead scoring is supposed to bring clarity.

It should tell your sales team who to call first. It should help marketing prove pipeline impact. It should align your entire revenue engine around what “qualified” really means.

But in many organizations, lead scoring becomes something else entirely: a legacy system no one fully trusts, rarely updates, and quietly works around.

A flawed lead scoring model doesn’t fail loudly. It fails quietly and gradually: sales reps start ignoring MQLs, conversion rates plateau, marketing generates more leads to compensate, and revenue efficiency suffers.

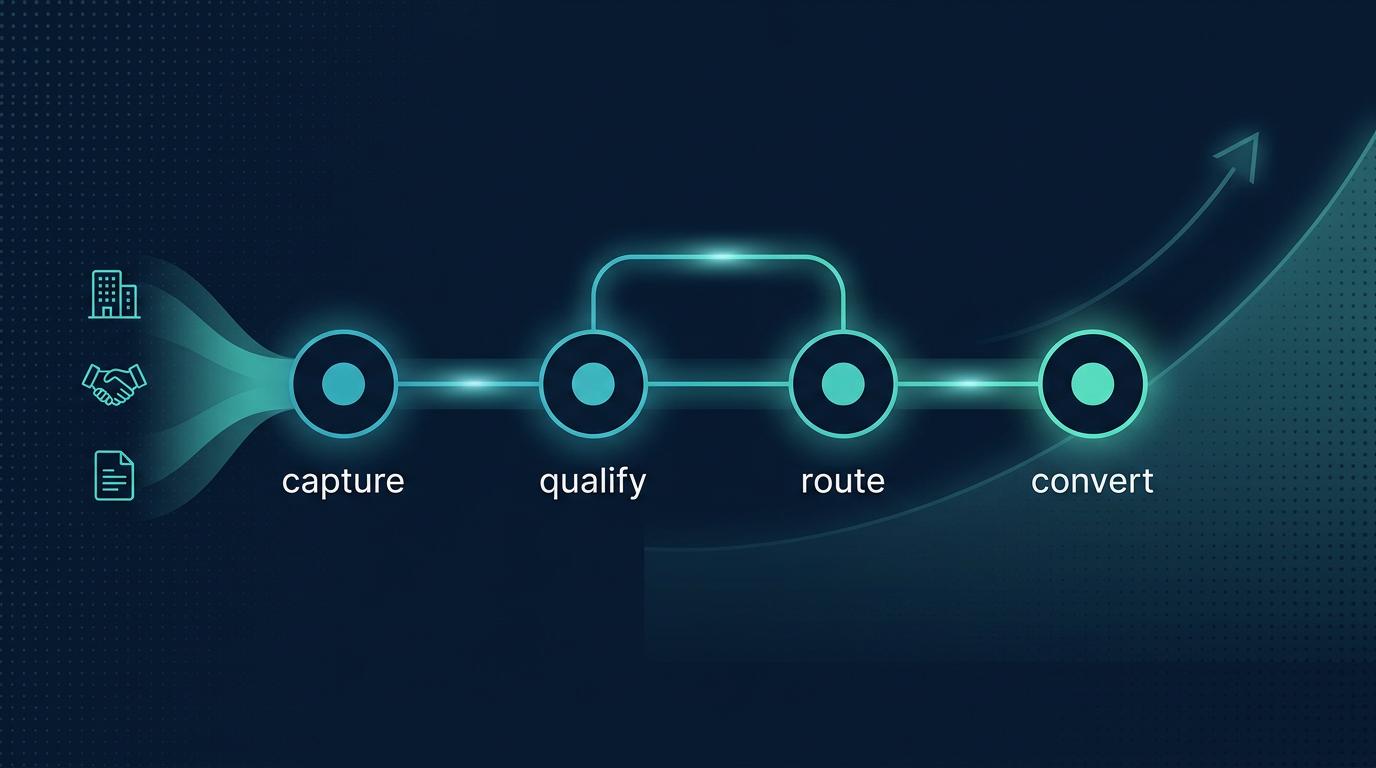

If you’re not sure whether your model is driving growth or holding it back, this diagnostic guide will help you evaluate where you stand and what to do next.

What a Healthy Lead Scoring Model Should Actually Do

Before diagnosing issues, it helps to define success.

A high-performing lead scoring model should:

- Prioritize leads that sales actually want to engage

- Improve MQL-to-SQL conversion rates

- Align marketing and sales around a shared qualification framework

- Reflect your Ideal Customer Profile (ICP)

- Adapt as buyer behavior and market conditions evolve

- Provide clear, explainable logic behind every score

Most importantly, a healthy lead scoring model should correlate with revenue outcomes, not just engagement metrics.

If your scoring system isn’t delivering these results, some or all of the following red flags may apply.

Signs Your Lead Scoring Model Is Holding You Back

1. Sales Ignore Your MQLs

Symptom: Sales reps cherry-pick leads or bypass marketing-qualified leads entirely.

Why it happens: Scoring criteria are based on surface-level engagement, such as email opens, website visits, and content downloads, rather than on buying intent or fit.

Impact:

- Declining trust between marketing and sales

- Lower MQL follow-up rates

- Wasted acquisition spend

What to do:

- Run a closed-won analysis.

- Compare high-scoring leads to actual revenue-driving customers.

- Involve sales in redefining qualification thresholds and scoring weights. Lead scoring should be co-owned, not marketing-controlled.
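To make the comparison concrete, here is a minimal sketch of a closed-won check, assuming you can export each lead’s score at handoff alongside whether it eventually closed. The `Lead` record and `compare_mqls_to_revenue` function are hypothetical names for illustration, not part of any specific CRM.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    score: int        # hypothetical field: the model's score when the lead was handed off
    closed_won: bool  # hypothetical field: whether the lead became a closed-won deal


def compare_mqls_to_revenue(leads: list[Lead], mql_threshold: int) -> dict[str, float]:
    """Compare how often leads at or above vs. below the MQL threshold became customers."""
    above = [lead for lead in leads if lead.score >= mql_threshold]
    below = [lead for lead in leads if lead.score < mql_threshold]

    def win_rate(group: list[Lead]) -> float:
        # Return 0.0 for an empty group instead of dividing by zero.
        return sum(lead.closed_won for lead in group) / len(group) if group else 0.0

    return {
        "mql_win_rate": win_rate(above),
        "non_mql_win_rate": win_rate(below),
    }
```

If MQLs close no more often than leads below the threshold, the model is not separating buyers from browsers, which is exactly why sales stop trusting it.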

2. Your Conversion Rates Haven’t Improved

Symptom: MQL volume increases, but MQL-to-SQL conversion remains flat or declines.

Why it happens: The model may be outdated or misaligned with your current ICP, product focus, or go-to-market strategy.

Impact:

- Artificially inflated MQL numbers

- Revenue forecasting inaccuracies

- Increased cost per opportunity

What to do:

- Audit historical performance data.

- Identify which attributes and behaviors are present in closed-won deals.

- Adjust scoring weights to reflect real buying signals rather than assumed intent.
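One simple way to spot the attributes and behaviors that matter is to compute each one’s “lift”: the win rate among deals that had the attribute, divided by the overall win rate. The sketch below assumes a hypothetical export format where each historical deal is a set of attribute labels plus a won/lost flag; the label names are illustrative only.

```python
from collections import defaultdict


def attribute_lift(deals: list[dict]) -> dict[str, float]:
    """Return win-rate lift per attribute: (win rate with attribute) / (overall win rate).

    Each deal is assumed to look like:
        {"attributes": {"industry:saas", "visited:pricing"}, "won": True}
    """
    if not deals:
        return {}
    overall = sum(d["won"] for d in deals) / len(deals)
    if overall == 0:
        return {}  # no wins at all, so lift is undefined

    counts = defaultdict(lambda: [0, 0])  # attribute -> [deals with attribute, wins]
    for deal in deals:
        for attr in deal["attributes"]:
            counts[attr][0] += 1
            counts[attr][1] += deal["won"]

    return {attr: (wins / n) / overall for attr, (n, wins) in counts.items()}
```

A lift well above 1.0 suggests the attribute deserves more points; a lift near or below 1.0 suggests it is being overweighted. With only a handful of deals per attribute, treat the numbers as hypotheses to validate with sales rather than final weights.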

3. You’re Overweighting Vanity Metrics

Symptom: Leads earn high scores for low-intent activities.

Examples:

- Opening emails

- Visiting blog posts

- Downloading top-of-funnel content

These signals show interest, not readiness.

Why it happens: Engagement metrics are easy to track, so they dominate scoring frameworks.

Impact:

- Sales engages leads who are still researching

- Pipeline becomes cluttered

- Marketing optimizes for clicks instead of conversions

What to do:

Prioritize high-intent behaviors such as:

- Demo requests

- Pricing page visits

- Product-focused content consumption

- Repeat engagement over short time windows

- Sales touchpoint responses

Scoring should distinguish curiosity from buying intent.
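As a sketch of what that distinction can look like in practice, the function below gives intent signals far more weight than low-intent engagement and adds a bonus when several high-intent actions cluster in a short window. Every point value, the 7-day window, and the event names are assumptions for illustration; your own weights should come from closed-won analysis.

```python
from datetime import datetime, timedelta

# Hypothetical point values: buying-intent signals outweigh low-intent engagement.
WEIGHTS = {
    "email_open": 1,
    "blog_visit": 2,
    "tofu_download": 3,
    "product_content": 10,
    "pricing_page_visit": 15,
    "sales_reply": 20,
    "demo_request": 40,
}
HIGH_INTENT = {"product_content", "pricing_page_visit", "sales_reply", "demo_request"}
REPEAT_WINDOW = timedelta(days=7)  # assumed "short time window"
REPEAT_BONUS = 10                  # assumed bonus for clustered high-intent activity


def behavior_score(events: list[tuple[str, datetime]]) -> int:
    """Sum event weights, plus a one-time bonus if 3+ high-intent events fall within REPEAT_WINDOW."""
    score = sum(WEIGHTS.get(kind, 0) for kind, _ in events)
    intent_times = sorted(ts for kind, ts in events if kind in HIGH_INTENT)
    # Check each run of three consecutive high-intent events for a tight cluster.
    for i in range(len(intent_times) - 2):
        if intent_times[i + 2] - intent_times[i] <= REPEAT_WINDOW:
            score += REPEAT_BONUS
            break
    return score
```

With these example weights, a lead who opens ten emails and reads five blog posts scores 20, while a lead who views product content, checks pricing, and requests a demo within the same week scores 75.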

4. Your Model Hasn’t Been Updated in 12+ Months

Symptom: “Set it and forget it” scoring.

Buyer journeys evolve. Product positioning changes. New competitors enter the market. But the scoring model remains untouched.

Why it happens:

- Limited internal bandwidth

- No formal review cadence

- Overreliance on the original build

Impact:

- Scores become disconnected from reality

- High-fit accounts slip through unnoticed

- Revenue teams operate on outdated assumptions

What to do:

Implement quarterly or semiannual scoring reviews tied to revenue KPIs. Treat your scoring model as a living system rather than a one-time project.
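A useful KPI for each review is MQL-to-SQL conversion by score band. The sketch below assumes a hypothetical export of `(score, became_sql)` pairs for leads that reached MQL; the 20-point band size is an arbitrary choice.

```python
def conversion_by_band(leads: list[tuple[int, bool]], band_size: int = 20) -> dict[str, float]:
    """Group MQLs into score bands and return the MQL-to-SQL rate for each band.

    leads: (score, became_sql) pairs for leads that reached MQL.
    """
    bands: dict[int, list[bool]] = {}
    for score, became_sql in leads:
        start = (score // band_size) * band_size  # lower bound of this lead's band
        bands.setdefault(start, []).append(became_sql)
    # Report bands in ascending score order.
    return {
        f"{start}-{start + band_size - 1}": sum(outcomes) / len(outcomes)
        for start, outcomes in sorted(bands.items())
    }
```

In a healthy model, conversion should rise with the score band. If a top band converts worse than a middle one, that is a signal the weights have drifted from current buying behavior.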

5. Marketing and Sales Disagree on What “Qualified” Means

Symptom: Ongoing debates about MQL definitions.

Why it happens: Qualification criteria were defined in isolation or never formally documented.

Impact:

- Slow lead follow-up

- Inconsistent pipeline reporting

- Friction between teams

What to do:

Facilitate a structured alignment workshop where you define:

- ICP attributes

- Disqualification criteria

- Buying signals

- Service-level agreements (SLAs)

Then build your scoring logic around this shared definition.

Alignment isn’t a byproduct of scoring; it’s a prerequisite.

6. Your Best Customers Wouldn’t Score Highly

This is one of the most revealing tests.

Pull a sample of your top 20 closed-won customers. Would they have reached the MQL threshold based on your current scoring rules?

If not, your model is misaligned with revenue reality.
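Here is a minimal sketch of that test, assuming you can reconstruct each customer’s lead data from before the deal closed and re-run your current rules on it. The `current_rules` function is a hypothetical stand-in for your real scoring logic.

```python
from typing import Callable


def current_rules(lead: dict) -> int:
    """Hypothetical stand-in for your current scoring rules."""
    return 30 * (lead.get("employees", 0) >= 200) + 5 * lead.get("email_opens", 0)


def best_customer_test(
    customers: dict[str, dict],
    score_fn: Callable[[dict], int],
    mql_threshold: int,
) -> tuple[float, list[str]]:
    """Re-score closed-won customers with the current rules.

    Returns the share that would have reached MQL and the names that would not.
    """
    misses = sorted(name for name, data in customers.items() if score_fn(data) < mql_threshold)
    pass_rate = 1 - len(misses) / len(customers)
    return pass_rate, misses
```

The list of misses is often more useful than the pass rate itself: reviewing why specific best customers fell short shows which signals the model is missing or undervaluing.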

Why it happens:

The model was built on assumptions instead of historical data analysis.

Impact:

- Attracting and prioritizing the wrong segments

- Missed expansion opportunities

- Lower lifetime value (LTV)

What to do:

Reverse-engineer your scoring model by working backward from revenue. Identify patterns across:

- Firmographics

- Buying roles

- Engagement timing

- Sales cycle behavior

Then recalibrate scoring to reflect what actually converts.

7. The Model Is Too Complex to Explain

If only one person understands how scoring works, that’s a risk.

Why it happens:

Over-engineered logic, layered automation rules, and years of incremental additions.

Impact:

- Low organizational trust

- Difficulty troubleshooting performance issues

- Fear of making updates

What to do:

Simplify. Document. Rationalize.

A high-performing model should be sophisticated in logic but clear in explanation. If stakeholders can’t understand it, they won’t trust it.
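One way to keep a model explainable is to express every rule with a plain-language label and have the scorer return the reasons alongside the total. The sketch below uses hypothetical rules and point values purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str                         # plain-language label shown to sales
    points: int                       # points added (or subtracted) when the rule fires
    applies: Callable[[dict], bool]   # test run against the lead's data


# Hypothetical example rules; replace with your documented criteria.
RULES = [
    Rule("Company size within ICP (200+ employees)", 25, lambda lead: lead.get("employees", 0) >= 200),
    Rule("Visited pricing page", 15, lambda lead: lead.get("pricing_visits", 0) > 0),
    Rule("Requested a demo", 40, lambda lead: lead.get("demo_requested", False)),
    Rule("Disqualified email domain", -50, lambda lead: lead.get("disqualified_domain", False)),
]


def explain_score(lead: dict, rules: list[Rule]) -> tuple[int, list[str]]:
    """Return the total score plus one human-readable line per rule that fired."""
    fired = [rule for rule in rules if rule.applies(lead)]
    total = sum(rule.points for rule in fired)
    reasons = [f"{rule.points:+d}  {rule.name}" for rule in fired]
    return total, reasons
```

Because each score comes with its own itemized reasons, a rep can see at a glance why a lead is an MQL, and anyone reviewing the model can change a rule without reverse-engineering layered automation.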

A Quick Lead Scoring Health Check

Use this checklist to evaluate your current system:

- Has our scoring model been updated in the last 6–12 months?

- Do sales consistently accept and act on our MQLs?

- Are our scoring criteria tied to revenue data?

- Can we clearly explain how points are assigned?

- Have we analyzed scoring patterns in closed-won vs. closed-lost deals?

- Do we differentiate between engagement and buying intent?

If several of these raise concerns, your model likely needs a structured review.

What an Optimized Lead Scoring Model Looks Like

An effective lead scoring framework is:

Data-driven: Built using historical opportunity and revenue data.

Multi-dimensional: Incorporates behavioral, firmographic, and intent signals.

Continuously tested: Reviewed against conversion and revenue KPIs on a regular cadence.

Aligned cross-functionally: Co-owned by marketing, sales, and revenue operations.

Transparent and explainable: Clear enough that stakeholders trust it.

When scoring is optimized, it becomes a growth lever that improves sales productivity, increases pipeline velocity, and enhances forecasting accuracy.

When to Bring in Expert Support

Sometimes the issue is capacity, rather than awareness.

You may benefit from external expertise if:

- MQL acceptance rates are declining

- Revenue growth has stalled despite strong lead volume

- Sales and marketing alignment is strained

- Your team lacks bandwidth for deep data analysis

- Your martech stack has grown more complex

Lead scoring sits at the intersection of strategy, analytics, and automation. Optimizing it requires both technical precision and revenue alignment.

If you suspect your scoring model may be limiting pipeline performance, contact us and book a Systems Strategy Call.

A structured audit can uncover misalignments, recalibrate scoring logic, and turn your model into a measurable revenue driver.

Lead Scoring Should Drive Revenue, Not Just Reports

A lead scoring model isn’t successful because it exists or because it produces professional-looking reports. It’s successful when it improves revenue outcomes.

If your model is:

- Inflating MQL numbers

- Creating friction between teams

- Failing to predict conversions

- Or simply untouched for too long

…it may be holding your growth back.

Lead scoring is one of the most fixable components of your revenue engine. With the right data, alignment, and optimization framework, it can become a powerful lever for predictable pipeline growth.

Revenue efficiency isn’t optional; it’s essential.

FAQ

Q1: How often should you review your lead scoring model?

At least every 6–12 months, or whenever your ICP, product, or sales process changes.

Q2: What’s the biggest mistake in lead scoring?

Overweighting engagement metrics instead of buying intent and revenue data.

Q3: Why do sales teams ignore MQLs?

Because scoring models often prioritize activity over qualification and fit.

Q4: What data should inform lead scoring?

Closed-won analysis, ICP fit, buying signals, and conversion performance data.